Making AI real with the Groq LPU inference engine

Current options for deploying GenAI are slow and expensive, and cannot support real-time inference such as running AI at conversational speeds. Groq's LPU inference engine offers a fundamentally new type of inference solution, with performance that is 10 times faster than standard models. Join this session to learn how Groq's approach opens the door to a new class of real-time AI solutions that could transform organisations and solve big challenges.

Speakers

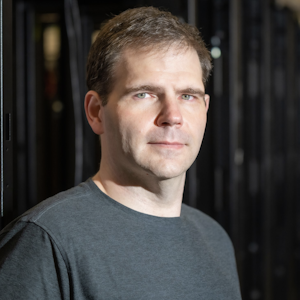

Jonathan Ross

Founder & CEO, Groq

Mohamed Taha

Senior Correspondent, BBC

Topics

AI and machine learning
Design
Hardware and robotics